Executive Summary

- Many AI models perform well in controlled testing yet break down when applied to real laboratory data, slowing R&D progress and eroding researcher trust.

- Curated scientific data produces measurably better models. CAS findings show roughly twice the prediction accuracy with half the training data in drug-protein binding studies, and more than 30% improvement in synthetic pathway prediction when scientist-verified atom mappings are used.

- Sustained AI maturity depends on three operational practices working together: data governance, workflow validation, and data sustainability.

AI continues to gain traction across scientific R&D. However, many organizations are discovering that early-stage models perform differently when applied to real-world research. Algorithms that appear reliable in controlled evaluations often exhibit inconsistent behavior when confronted with the variability, documentation practices, and experimental diversity characteristics of active research environments. This discrepancy highlights the need for greater AI maturity in R&D.

A major contributor to the difference in behavior is the underlying data structure. Experimental results depend on how measurements were generated and the way those results were documented. These details differ widely across teams, ranging from variations in instrumentation and protocols to differences in units, naming conventions, and even the level of detail recorded in lab notes. Additionally, many AI systems trained on limited, narrow slices of data cannot interpret unexpected variations from their initial, static scopes. Without proper preparation, predictions become unstable and break down during laboratory validation. These inconsistencies accumulate and create a gap between prototype performance and real-world deployment, limiting the impact of early AI efforts and setting the stage for deeper organizational challenges.

Why AI progress stalls in scientific R&D

These challenges reflect a deeper maturity gap common across many R&D organizations. Early AI models perform well in controlled test environments, but once they encounter real laboratory data or are deployed across multiple research efforts, performance declines and progress slows. Cracks emerge when models face inconsistent input data, lack the contextual grounding needed to interpret results, or utilize a divided digital infrastructure that prevents seamless data flow. These issues reveal that the bottleneck is not the model itself, but the readiness of the surrounding data, processes, and infrastructure. Understanding where this breakdown occurs helps explain why achieving AI maturity in scientific R&D remains challenging.

Variations in scientific data produce inaccurate, untrustworthy results

“Garbage In, Garbage Out” is a familiar phrase, but in scientific R&D it reflects a deeper structural challenge. Experimental results originate from instruments, laboratory teams, and documentation systems that follow different conventions for formatting, terminology, and metadata capture. Two groups may run the same assay, yet their output files can differ significantly in structure, units, contextual detail, and even naming practices. However, structural inconsistency is often secondary to a more consequential problem: experimental conditions themselves (temperature, instrument calibration, timing, etc.) can dramatically shift results, and these variables are frequently under documented or recorded inconsistently across teams. Even when numerical values look comparable, the conditions that produce them may be different. When training data carries these inconsistencies, a model cannot reliably distinguish meaningful scientific variation from reporting artifacts. Misaligned identifiers, missing metadata, and incomplete records prevent it from forming stable representations of the underlying science, thus producing a model whose learned patterns are inaccurate and whose outputs cannot be trusted.

A second, equally serious problem emerges when a trained model is put to use. Even a well-built model will break down if the data flowing into it during real-world application was prepared under different assumptions than the original training data. Differences in units, identifiers, experimental context, or file structure between training and inference pipelines cause the model to misinterpret inputs, generating predictions that are unreliable or unusable. Maintaining alignment between training data assumptions and real-world data pipelines is therefore an ongoing requirement for any AI solution deployed in active R&D environments.

Unclear reasoning reduces confidence in AI outputs

Explainability is another factor important to the adoption of AI technology. Usage of AI models declines when model behavior does not align with what researchers or leaders expect based on established laboratory results. Scientists rely on predictions and outputs that can be validated through experiments. When a prediction cannot be traced back to the structured data (in general) or a clear reasoning path, confidence in the system erodes. Without clarity on why a prediction was made, researchers hesitate to incorporate it into ongoing experiments.

The uncertainty deepens when the system does not indicate which inputs influenced its predictions/results. Slight differences in assay setup, sample attributes, or molecular descriptors can influence outcomes. Without visibility into what informed the model’s conclusion, teams cannot assess when the output is scientifically appropriate. Over time, these explainability gaps limit adoption, hinder troubleshooting, and reduce the ability of AI to reliably support or accelerate active research programs.

Fragmented infrastructure interrupts data continuity

Beyond being able to form solid reasoning pathways to connect predictions to the input, another challenge arises from fragmented digital infrastructure. Many research groups rely on software adopted at different stages of their digital transition, resulting in data and algorithms being spread across isolated platforms. Experimental records may reside in one environment, while analytical outputs or proprietary references are stored in another location.

These divisions disrupt data continuity, making it difficult to trace how a model produced its output or how that output relates to specific laboratory conditions. When systems cannot exchange data reliably, teams are forced to transfer information manually by copying files, exporting results, or reconstructing records across platforms. These manual transfers increase the risk of errors and weaken confidence in predictions that depend on the information moved through fragmented workflows. Over time, the lack of end‑to‑end integration limits reproducibility, slows iteration, and constrains AI effectiveness within active research programs.

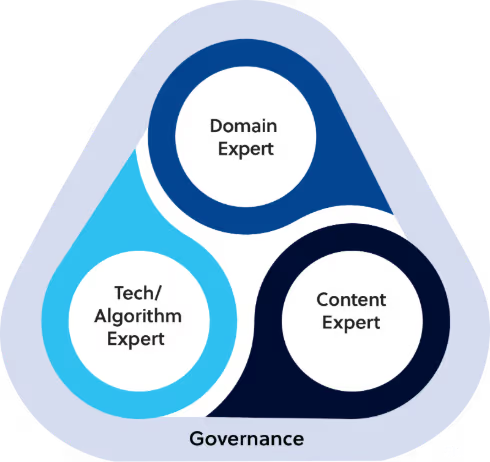

The Triangle of Success framework for scientific R&D

To address these challenges, CAS utilizes the Triangle of Success as a structured partnership framework tailored for scientific R&D. This model explains how coordinated scientific review, intentional technical design, and structured knowledge management create the conditions required for stable model behavior. By aligning expertise across these three pillars, organizations can systematically strengthen their data, infrastructure, and scientific foundations to accelerate their progress toward higher AI maturity.

The Triangle of Success applies at two critical stages of the AI lifecycle. The first is model creation: ensuring the prediction problem is framed correctly and scientifically, that the training data carries the right context and quality, and the AI approach is well-matched to the use case. The second is model application: once a model has been trained and validated, the framework guides how it is deployed into real-world research workflows by verifying that the original assumptions still hold, incoming data continues to meet the conditions the model was built on, and outputs remain trustworthy as research environments evolve. Together, these two applications of the framework ensure that AI capability is built and used for purpose.

The framework highlights the distinct yet interdependent contributions of each group: domain experts interpret experimental variability, content experts standardize and stabilize the data, and technology experts unify the digital infrastructure ecosystem. Together, they provide the stability and scientific foundation required for AI to reliably influence R&D decisions.

Domain expert

Domain experts within an organization apply scientific knowledge and real-world research experience to assess whether an AI model accurately behaves. Core responsibilities include:

- Clarifying which experimental factors influence a result and how they should be reflected in the data.

- Setting expectations for what the model should learn and where scientific constraints apply.

- Checking whether outputs are consistent with established scientific understanding rather than artifacts in the data.

Content expert

Content experts, such as CAS specialists/experts, ensure that the scientific information entering an AI system is accurate and verified, and that each input reflects established relationships rather than inconsistent or incomplete records. They provide guidance by:

- Preparing datasets that reflect validated relationships spanning chemical, biological, and materials science.

- Resolving conflicting identifiers, missing metadata, inconsistent terminology, or other issues that undermine model stability.

- Adding context from verified scientific sources so that the input data represents what is observed in real R&D environments.

- Maintaining content systems that preserve provenance and keep datasets aligned with current scientific knowledge as the field evolves.

Technology and algorithm expert

Technology and algorithm specialists create infrastructure that allows AI systems to function reliably across research programs. They support AI development by:

- Building systems that automate data movement through pipelines, eliminating the need for manual work and reducing error rates.

- Monitoring how models behave when new data updates the system and adjusting workflows when performance changes.

- Integrating predictions into existing research tools so that teams can use them during routine decision-making.

Why better data leads to better predictive models

AI maturity in scientific R&D depends on the quality and consistency of the data used to train each model. When the Triangle of Success is applied in practice, its impact is most visible in the quality of the training data and the performance that follows. Curated inputs provide models with a clearer view of the relationships they are intended to learn, thereby reducing noise that can limit their reliability in real-world research settings. CAS findings demonstrate that stronger data foundations produce more stable and accurate model behavior.

In one drug–protein binding prediction study, models trained on scientist-curated CAS data delivered roughly twice the prediction accuracy while using 50% fewer data points. This improvement reduced unnecessary experimental follow-up and helped teams prioritize the most plausible binding candidates earlier in their workflow.*

A separate evaluation of synthetic pathway prediction showed similar gains. When scientist-verified atom mapping was added to the training data, pathway accuracy increased by more than 30%. The verified mappings resolved structural ambiguities that had previously misdirected the model, leading to predictions that more closely matched laboratory observations and required fewer corrective iterations.**

These findings demonstrate that AI models perform more effectively when trained on harmonized scientific data that has been expertly reviewed. Achieving these gains consistently requires more than one‑off improvements. It depends on operational practices that reinforce maturity over time.

Operational steps that strengthen AI maturity

AI maturity increases when an organization’s AI and data strategy begins to inform everyday R&D decisions, from how teams prepare data to how they use model predictions in projects. Progress depends on building systems that adapt to research needs and maintain a clear link between the data that enters a workflow and the projections that emerge from it.

Three operational practices help teams move from early pilots to more mature, reliable AI-enabled workflows.

Data governance

- Sets rules for how data should be prepared before entering a workflow

- Defines checks that confirm whether inputs and predictions meet scientific expectations

- Maintains documentation of dataset changes, model updates, and evaluation steps

- Assigns responsibility for reviewing data and approving workflow updates

Workflow validation

- Stores all steps used to generate a prediction, including preprocessing and model settings, so results can be traced and repeated

- Confirms stability through repeat runs performed by different teams or at other times

- Brings scientific and technical staff together to review and resolve discrepancies

- Sets clear criteria for when a workflow is stable enough to support additional projects

Data sustainability

- Adds new, well-curated data through controlled intake processes to keep models aligned with current scientific knowledge

- Applies standardized formatting, metadata, and context rules to every new dataset before it enters a workflow

- Uses retrieval-augmented methods, allowing models to reference up-to-date scientific sources during inference

- Monitors shifts in data patterns or assumptions and updates datasets or models to prevent prediction quality decline

When data governance, workflow validation, and data sustainability are maintained across workflows, AI becomes more reliable and supports advancement toward higher maturity.

How AI maturity strengthens scientific workflows and outcomes

AI maturity determines whether scientific organizations can rely on model outputs for decisions that shape experiments and influence program direction. Many teams see strong performance in controlled evaluations, yet reliability drops once models operate in real R&D settings where measurement variation and workflow differences affect behavior.

Progress toward higher maturity depends on strengthening the scientific foundation beneath each model. Domain experts clarify the research assumptions and scientific constraints the system must follow, ensuring the model’s reasoning aligns with established knowledge. Technology leads maintain the digital environments that preserve reproducibility, track data lineage, and keep outputs traceable as data evolves. Content experts supply harmonized, verified inputs that reflect real-world behavior and eliminate inconsistencies that destabilize learning.

When these capabilities align, AI systems perform more consistently under scientific scrutiny and can support day-to-day decisions with greater confidence.

To learn how CAS can support the next steps in your AI journey, explore these Science-smart AI resources.

- - -

* CAS case study showcasing the impact of high-quality, scientist-curated content on the predictive accuracy for the biological activity of a ligand against a protein target. By using CAS-curated content instead of noisy public data, the model increased 2x in prediction accuracy while using a training data set that was half the size of the original.

** Case study developed in collaboration with Molecule.One. A retrosynthesis model trained on public reactions data was re-trained using CAS data, leveraging the scientist-curated mappings between reactant and product atoms that are critical to accurate chemical predictions. With CAS data, accurate prediction of valid synthetic routes increased by 30%, as rated by expert synthetic chemists.

Questions and answers

Why does data quality matter for AI?

Data quality matters for AI because experimental results vary widely in how they are generated, formatted, and documented. Two teams running the same analysis can produce data using different units, identifiers, and metadata. When training data carries these inconsistencies, models cannot tell meaningful scientific variation from reporting artifacts, and predictions become unreliable. Clean, harmonized data gives models a clearer view of the relationships they are meant to learn.

What is data governance for AI?

Data governance for AI is the set of rules and responsibilities that determine how data is prepared, checked, and tracked before it enters a model. It defines formatting standards, validation checks, and documentation requirements for every dataset. Strong data governance also assigns clear ownership for reviewing inputs, approving workflow changes, and confirming that predictions meet scientific expectations.

How do you start an AI initiative in R&D?

Starting an AI initiative in R&D begins with three foundational steps. First, frame the scientific question clearly so the model has a defined problem to solve. Second, audit the underlying data for consistency in units, identifiers, metadata, and experimental context, and curate it before training. Third, assemble the right mix of expertise: domain scientists, content specialists, and technology leads.

.avif)