Executive Summary

- Data quality is the make-or-break factor for AI in science.

- Data integrity and data harmonization are human-led disciplines, not purely automated processes.

- Harmonized data produces measurable improvements in AI model performance.

- "Dark data" represents a significant untapped resource for AI-driven research.

- AI adoption across scientific fields is accelerating rapidly, but data infrastructure remains the limiting factor.

There is no doubt that artificial intelligence (AI) has revolutionized scientific inquiry with its ability to analyze incredibly vast datasets. AI’s capacity for data and its computational speed surpass what humans are capable of, and the technology will continue to redefine what is possible in scientific discovery.

Yet there is also no question that the concept “garbage in, garbage out” is true — AI algorithms and models can only produce outcomes as trustworthy as the data that powers them. The problem of quality data is as old as science itself — no hypothesis can be repeatedly validated if it’s based on faulty data — but the establishment of AI-driven inquiry along with the sheer volume of data available today makes this issue exponentially more challenging.

A big part of the solution is data harmonization, which provides ensemble models, large language models (LLMs), and other types of AI systems with correct and consistent data. Quality data makes the difference, for example, in successful AI use cases across biomedicine and materials science. These are two fields where data complexity—in protein structures, atomic structures, DNA, and more—underscores the necessity of clean, harmonized data for AI models.

Our curation of the CAS Content Collection™, the largest human-curated repository of scientific information, gives us unparalleled insights into data harmonization best practices. Let’s explore how it works and examine the often-overlooked role of human expertise in driving successful AI-powered discoveries:

AI models for chemistry: today’s landscape and what’s on the horizon

AI drives faster drug discovery and the identification of novel materials. We’re seeing a proliferation of model types in both biomedicine and materials science, and by performing concept co-occurrence analysis, researchers can spot emerging trends in modeling approaches. This in-depth look at the specific tools and techniques for applying AI to life science and materials science reveals where AI technology is making an impact now, and where it’s going next:
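As a rough illustration of concept co-occurrence analysis, the sketch below counts how often pairs of concepts appear together in the same document. The `documents` list and the `cooccurrence_counts` function are hypothetical names with made-up inputs, not part of any CAS tool; real analyses run over indexed literature at much larger scale.

```python
from collections import Counter
from itertools import combinations

# Hypothetical input: each document is reduced to the set of concepts indexed for it.
documents = [
    {"graph neural network", "protein structure"},
    {"graph neural network", "protein structure", "drug discovery"},
    {"random forest", "biomarker discovery"},
    {"transformer", "literature mining", "drug discovery"},
]

def cooccurrence_counts(docs):
    """Count how many documents mention each unordered pair of concepts."""
    counts = Counter()
    for concepts in docs:
        # sorted() gives every pair a canonical order, so (a, b) and (b, a) are merged.
        for pair in combinations(sorted(concepts), 2):
            counts[pair] += 1
    return counts

counts = cooccurrence_counts(documents)
# Pairs that co-occur most often hint at which methods are being applied to which problems.
top_pairs = counts.most_common(3)
```

Tracking how these counts change from year to year is one simple way to see a modeling approach gaining ground in a given application area.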

Data integrity and harmonization: the foundation of building AI success

Leveraging AI for scientific inquiry requires clean, standardized data. Error correction and workflow orchestration are critical steps in building the necessary data foundation, but human experts remain a key part of these efforts because they standardize and validate data inputs. We see tangible benefits in predictive models and advanced analytics when using harmonized data, a reminder that people are still a vital part of leveraging the most advanced technologies.

AI in pharma: leading efforts in drug repurposing

Pharma researchers have access to vast amounts of data about drug formulations, archived clinical trials, and electronic health records, to mention only a few sources. Leveraging this data properly can reveal important insights for repurposing existing drugs, which is a powerful strategy for addressing patient needs. How can they do it? With a knowledge management solution that results in clean, standardized data to power AI tools effectively.

Having a wealth of diverse data can significantly enhance the potential of AI in pharmaceutical development and accelerate your repurposing pipeline. However, more data does not necessarily mean better data.

Predictive models: accelerating drug discovery with data quality

Predicting ligand-to-target activity or metabolite profiles is a critical modeling task in drug discovery, but getting trustworthy results requires extensive data harmonization. This conversation between CAS scientists explains why human data curation plays such an important role in preparing the information that drives predictive modeling. Find out about some of the key challenges in model development and how CAS addresses continual model training to keep our solutions up to date.

White Paper

Five knowledge management strategies for your R&D workflow

Researchers understand the importance of breaking down data silos and ensuring scientific information is clean and consistent. But what are the best practices for implementing these steps? In this white paper, we share five knowledge management tips to improve scientific R&D workflows. Download the white paper today:

What’s next in data harmonization

No longer a far-off innovation, AI-powered solutions are a central part of scientific discovery today. AI models can identify novel compounds and materials, spot drug repurposing opportunities, and analyze the extraordinary amount of data available across scientific disciplines. But these models require clean, standardized data to generate quality insights, and getting to that state relies on human expertise as much as technological capacity.

Scientific data exists in tables, diagrams, and supplementary materials from publications. Research organizations have all sorts of “dark data” that is unstructured but contains valuable insights. By collaborating with data experts and building a strong foundation of harmonized data, researchers can fully utilize all the information they have and derive meaningful benefits from AI models and algorithms.

Questions and answers

Q: What is data harmonization?

A: Data harmonization is the process of integrating information from multiple sources and standardizing it into a cohesive framework so that it can be reliably analyzed, compared, and shared. In the context of AI, it matters because models trained on inconsistent or fragmented data will inherit those inconsistencies in their outputs.
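A minimal sketch of what "standardizing into a cohesive framework" can look like in practice: two sources report the same kind of measurement with different field names and units, and each record is mapped onto one shared schema. The field names, the `harmonize_a` and `harmonize_b` helpers, and all values are invented for illustration.

```python
# Hypothetical records: two sources describing the same measurement differently.
source_a = [{"compound": "Compound-X", "ic50": 1.5, "unit": "uM"}]
source_b = [{"name": " compound-x ", "IC50_nM": 1200.0}]

# Conversion factors to a single target unit (nanomolar).
TO_NANOMOLAR = {"nM": 1.0, "uM": 1_000.0, "mM": 1_000_000.0}

def harmonize_a(rec):
    """Map a source A record onto the shared schema: lowercase name, IC50 in nM."""
    return {
        "compound": rec["compound"].strip().lower(),
        "ic50_nm": rec["ic50"] * TO_NANOMOLAR[rec["unit"]],
    }

def harmonize_b(rec):
    """Map a source B record onto the same schema (values are already in nM)."""
    return {
        "compound": rec["name"].strip().lower(),
        "ic50_nm": rec["IC50_nM"],
    }

harmonized = [harmonize_a(r) for r in source_a] + [harmonize_b(r) for r in source_b]
```

Once both records share a compound key and a unit, they can be compared or pooled for model training. Deciding that the two names really refer to the same substance is the part that typically needs expert review.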

Q: Why can't AI automate data harmonization?

A: AI and machine learning tools can help process large volumes of data, but they lack the scientific rigor and judgment needed to resolve the kinds of ambiguities that often appear in lab notes, journal articles, academic papers, and patents. While large language models can differentiate concepts based on everyday use, a single protein might be referenced using dozens of different names, translations, and identifiers that are only apparent to experts in their respective fields.
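The sketch below illustrates one way to combine automation with expert judgment for name resolution: known aliases are mapped to a canonical label, and anything the map does not cover is set aside for a human curator instead of being guessed. The `SYNONYMS` map and `resolve_targets` function are illustrative assumptions, and the alias list is deliberately tiny.

```python
# Illustrative, expert-maintained alias map (normalized alias -> canonical label).
SYNONYMS = {
    "egfr": "EGFR",
    "erbb1": "EGFR",
    "her1": "EGFR",
    "epidermal growth factor receptor": "EGFR",
}

def resolve_targets(raw_names):
    """Return (resolved canonical labels, names that need human review)."""
    resolved, needs_review = [], []
    for name in raw_names:
        # Normalize case and collapse repeated whitespace before lookup.
        key = " ".join(name.lower().split())
        if key in SYNONYMS:
            resolved.append(SYNONYMS[key])
        else:
            # Unknown spellings are queued for a curator rather than guessed.
            needs_review.append(name)
    return resolved, needs_review

resolved, needs_review = resolve_targets(
    ["HER1", "ErbB1", "Epidermal  growth factor receptor", "EGF-R"]
)
```

Here the first three names resolve to the same target, while the unfamiliar spelling "EGF-R" lands in the review queue, where a domain expert can confirm it and extend the map.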

Q: How does data harmonization improve predictive models for science?

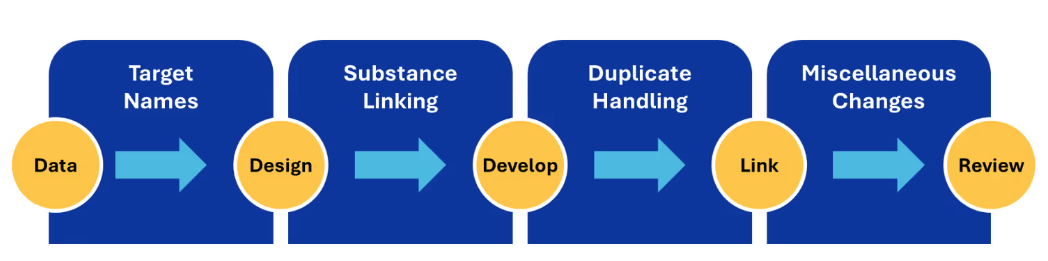

A: In a test model, normalizing target names and improving substance linking improved accuracy significantly. Predictions became more consistent with experimental results, and the ability to identify the most promising drug candidates earlier in the screening process improved accordingly.

Q: What is "dark data," and how does it affect AI-driven drug repurposing?

A: Dark data refers to the unstructured information that organizations accumulate over time but never effectively leverage. When dark data is properly harmonized and made accessible, it can substantially enhance the diversity and reliability of AI training datasets, improving the quality of repurposing predictions and reducing the risk of biased or misleading results.

Q: What types of AI models are most commonly applied in science research today?

A: Large language models (LLMs) are increasingly used for knowledge extraction, hypothesis generation, and generative design. Classical methods like Random Forest and Support Vector Machines remain common for tasks like biomarker discovery and cancer subtype identification, especially when training data is limited, though deep learning has displaced them in high-data settings. Graph Neural Networks and transformer-based models have surged since 2020 — the former for protein structure and interaction prediction, the latter for biomedical literature mining and sequence analysis. In materials science, classical machine learning dominates property prediction, while CNNs are applied to microstructural image analysis and neural network-based surrogate models support simulation-heavy workflows.

Q: Why do ensemble models outperform single-model approaches?

A: No single model captures the full complexity of any scientific data landscape. Ensemble approaches combine multiple models to generate a "consensus prediction" that is generally more reliable than what any individual model could produce alone.
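As a simple sketch of a consensus prediction, the example below averages the outputs of several models. The three toy models and the `consensus_predict` helper are hypothetical stand-ins; production ensembles often use weighted averaging, voting, or stacking instead of a plain mean.

```python
from statistics import mean

# Hypothetical stand-ins for trained models, each with its own systematic bias.
def model_a(x):
    return 0.9 * x

def model_b(x):
    return 1.1 * x

def model_c(x):
    return x + 0.3

def consensus_predict(models, x):
    """Average the individual predictions into one consensus value."""
    return mean(m(x) for m in models)

models = [model_a, model_b, model_c]
consensus = consensus_predict(models, 2.0)
```

Because the individual models err in different directions, averaging tends to cancel part of each model's bias, which is the intuition behind the reliability gain described above.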

More Resources

Organizations facing data harmonization challenges, whether digitizing legacy records, normalizing compound libraries, or preparing datasets for AI modeling, can discuss custom data solutions designed around specific scientific domains and existing infrastructure.

Further reading

- Establishing new standards for AI prediction accuracy with custom training data

- Turn untapped pharma data into R&D.

- Custom-curated machine learning training dataset accelerates optimization of organic synthesis workflow

- How ensemble AI models deliver better scientific results

- Six examples that demonstrate how AI is helping power cutting-edge science research
